Jim Hannan (@HoBHannan), Principal Architect

Part 4 – vSphere 5 vMotion

In Part 4 of vSphere 5 Advantages for a VBCA, I turn my focus to vMotion and its enhancements in vSphere 5. VMware vMotion offers the ability to live-migrate virtual machines from one ESX host to another.

Looking Back

It amazes me to think how long vMotion has been around. Most of us remember our first demo of a vMotion migration. vMotion, originally introduced in ESX 2.0 with vCenter 1.0, offered a feature unlike any other in the industry. For many organizations, it was the quintessential reason to virtualize their workloads.

Care to guess what interactive application was used by VMware to demo the first migrations?

Answer: Pinball

How vMotion Works

The majority of the work performed by vMotion is copying the virtual machine's memory over to the destination ESX host. This memory migration can be broken down into the following three phases:

Phase 1: Guest Trace Phase

Consider this an accounting step. vMotion needs to account for every memory page in the virtual machine and for any changes made to those pages while the migration is in progress. To do this, it places a trace on each guest memory page, which lets it detect memory modifications during the migration.
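To make the accounting idea concrete, here is a minimal sketch of write tracking in Python. It is a toy model, not VMware's implementation: the `TracedMemory` class and its methods are hypothetical names invented for illustration, and real traces are installed by the hypervisor on guest pages rather than in application code.

```python
class TracedMemory:
    """Toy guest memory that records which pages are written (hypothetical model)."""

    def __init__(self, num_pages):
        self.pages = [0] * num_pages
        self.dirty = set()       # pages modified since the last collection
        self.tracing = False

    def install_traces(self):
        # Loosely corresponds to the guest trace phase: start tracking writes.
        self.tracing = True
        self.dirty.clear()

    def write(self, page, value):
        self.pages[page] = value
        if self.tracing:
            self.dirty.add(page)  # a traced write marks the page as changed

    def collect_dirty(self):
        # Return the pages changed since the last call and reset the record.
        changed = sorted(self.dirty)
        self.dirty.clear()
        return changed


mem = TracedMemory(num_pages=8)
mem.write(1, 42)        # written before tracing starts, so not recorded
mem.install_traces()
mem.write(3, 7)
mem.write(5, 9)
mem.write(3, 8)         # same page written twice, recorded once
first = mem.collect_dirty()
second = mem.collect_dirty()
print(first, second)
```

The point of the sketch is that once traces are in place, the migration only needs to ask "what changed since I last looked?" rather than compare all of memory again.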

Phase 2: Pre-copy Phase

The pre-copy phase is done in iterations. First, a full sweep and copy of all memory blocks is done. The second iteration only copies the blocks that have changed since the first iteration. From here, additional iterations may be run depending on the ability of previous iterations to keep up with the changed blocks.
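The iterative behavior can be illustrated with a rough simulation. This is a sketch under simplifying assumptions, not how vMotion is implemented: the function name, the fixed dirty rate, the fixed link rate, and the 50 MB switchover threshold are all made up for the example.

```python
def simulate_precopy(memory_mb, dirty_mb_per_s, link_mb_per_s,
                     switchover_threshold_mb=50, max_iterations=20):
    """Return ([(iteration, MB sent), ...], converged) for a toy pre-copy run.

    Assumes a constant dirty rate and a constant link rate (both hypothetical).
    """
    history = []
    remaining = memory_mb                    # first iteration copies everything
    for iteration in range(1, max_iterations + 1):
        seconds = remaining / link_mb_per_s  # time spent copying this pass
        history.append((iteration, remaining))
        # Memory dirtied while this pass was copying must be sent again.
        remaining = min(memory_mb, dirty_mb_per_s * seconds)
        if remaining <= switchover_threshold_mb:
            return history, True             # small enough to switch over
    return history, False                    # never caught up


# A 16 GB VM dirtying 100 MB/s over a link moving about 1000 MB/s.
history, converged = simulate_precopy(16384, 100, 1000)
for iteration, sent in history:
    print(f"iteration {iteration}: sent {sent:.1f} MB")
print("converged:", converged)

# The same VM dirtying memory faster than the link can copy it.
_, converged_fast = simulate_precopy(16384, 1200, 1000)
print("converged with 1200 MB/s dirty rate:", converged_fast)
```

With these made-up numbers, each pass sends roughly a tenth of the previous one, so the copy converges after a few iterations. When the dirty rate exceeds the link rate, the remaining set never shrinks and the loop gives up.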

Phase 3: Switchover Phase

The switchover phase is the final step in the migration. This is the cutover step, which quiesces the source VM and places the destination VM in a resumed state. This step typically takes less than one second.

All three of these phases are greatly optimized with the vSphere 5 vMotion enhancements.

Performance Improvements

Multiple network adapters: The VMkernel can transparently load balance a single vMotion across multiple network adapters.

The round-trip latency limit for vMotion networks has increased from 5 milliseconds to 10 milliseconds. In House of Brick’s opinion, this is a forward-looking enhancement that paves the way for native long-distance vMotion.

Improved memory tracing (Phase 1): According to VMware documentation, the tracing mechanism has been optimized to place traces faster.

vMotion has been improved so that it can effectively use the full bandwidth of a 10GbE interface. This may come as a surprise to some people, but many applications struggle to do so, mostly because of the CPU overhead that TCP/IP processing adds to the network stack. From my observations, this is no longer an issue with vMotion.

Stun During Page Send (SDPS): Ensures that a vMotion completes successfully even when memory blocks are changing faster than they can be copied. This new feature should be viewed with caution for VBCA applications that are latency sensitive. Let’s take a look at what SDPS does and what it means for your VBCA in the next section.

SDPS for VBCAs

In some rare cases, a VM will have memory changes that occur faster than the vMotion iteration can keep up with. In these cases, SDPS will slow the virtual machine down enough to allow the vMotion to complete the migration. This “slow down” could cause unwanted application latency. In the case of Oracle RAC and its interconnect, this could cause node eviction.
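The trade-off can be sketched with a toy model. This is only an illustration of the idea of slowing the guest so the copy can converge; it does not reflect the actual SDPS mechanism, and the function name, the halving factor, and the threshold are assumptions chosen for the example.

```python
def simulate_with_slowdown(memory_mb, dirty_mb_per_s, link_mb_per_s,
                           threshold_mb=50, throttle=0.5, max_iterations=50):
    """Toy pre-copy loop that slows the guest when a pass makes no progress.

    All parameters are hypothetical; returns None if it never converges.
    """
    remaining = memory_mb
    rate = dirty_mb_per_s
    slowdowns = 0
    for iteration in range(1, max_iterations + 1):
        seconds = remaining / link_mb_per_s
        new_remaining = min(memory_mb, rate * seconds)
        if new_remaining <= threshold_mb:
            return {"iterations": iteration, "slowdowns": slowdowns,
                    "final_dirty_rate": rate}
        if new_remaining >= remaining:
            # The guest is dirtying memory as fast as it is copied:
            # slow the guest down (the cost is application latency).
            rate *= throttle
            slowdowns += 1
        remaining = new_remaining
    return None


busy = simulate_with_slowdown(16384, 1200, 1000)   # dirty rate above link rate
quiet = simulate_with_slowdown(16384, 100, 1000)   # dirty rate well below link rate
print("busy VM:", busy)
print("quiet VM:", quiet)
```

In this model the quiet VM migrates without any slowdown, while the busy VM only converges after its effective dirty rate is pushed below the link rate. That forced reduction is the latency a sensitive application, such as a RAC interconnect, would feel.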

The SDPS feature can be disabled, but this is not recommended by HoB. Instead, we recommend that vSphere administrators leave this feature enabled and build a vMotion network capable of moving memory blocks fast enough to prevent an overrun.
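A back-of-the-envelope check can help when sizing that network. The sketch below assumes you already have an estimate of the VM's peak memory dirty rate; the function name, the efficiency factor, and the example numbers are hypothetical, and real throughput depends on the hosts and the network.

```python
def vmotion_headroom(nic_count, gbps_per_nic, dirty_mb_per_s, efficiency=0.9):
    """Ratio of usable vMotion bandwidth to the guest's memory dirty rate.

    A ratio comfortably above 1.0 suggests the copy can outpace the guest.
    The efficiency factor is an assumed allowance for protocol overhead.
    """
    mb_per_s_per_gbps = 125                  # 1 Gbit/s = 125 MB/s
    usable_mb_per_s = nic_count * gbps_per_nic * mb_per_s_per_gbps * efficiency
    return usable_mb_per_s / dirty_mb_per_s


# Example: two 10GbE adapters versus a VM dirtying an estimated 500 MB/s.
ratio = vmotion_headroom(nic_count=2, gbps_per_nic=10, dirty_mb_per_s=500)
print(f"headroom: {ratio:.1f}x")
```

If the ratio is close to or below 1.0 for your busiest VBCA, adding vMotion adapters or bandwidth is a better fix than disabling SDPS.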

Test Results

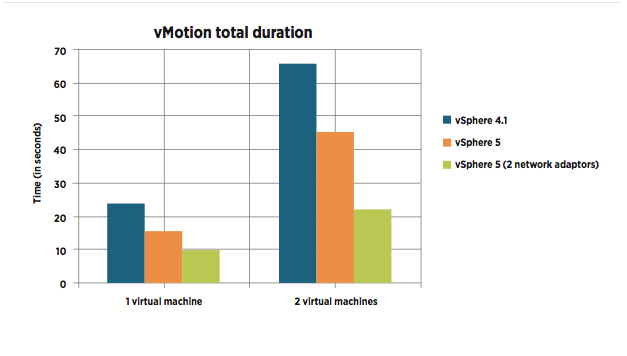

VMware recently benchmarked vSphere 5 vMotion against vSphere 4.1. One of the many tests VMware ran to compare vMotion performance was a database workload test. Using SQL Server running the open source DVD Store Version 2 (DS2) benchmark, VMware generated an RDBMS workload that ran during the vMotions. As you can see in the graph above, one test running vSphere 5 with two NICs for vMotion was approximately 42% faster than vSphere 4.1 with one NIC.

References:

1. VMware vSphere vMotion Architecture, Performance and Best Practices in VMware vSphere 5

2. vMotion, the story and confessions