Dave Welch (@OraVBCA), CTO and Chief Evangelist

VAPP2389 Use Storage Virtualization to Protect Business Critical Functionality with vSphere and Oracle Extended Distance Clusters – 1:00PM

Presented by Marlin McNeal – Yucca Group, and Sudhir Balasubramanian – VMware.

Sudhir is one of the four authors of Charles Kim’s new Oracle on VMware book. Marlin is the technical editor of Don Sullivan and Kannan Mani’s soon-to-be-published Oracle on VMware book.

I am drawing attention to this session because everybody wants HA. That includes business units that a decade ago didn’t even know how to spell it. The natural evolution is that everybody is going to want stretch HA. I think this presentation is a very good introduction to Oracle stretch HA concepts.

Over the years, House of Brick has heard these environments referred to in a variety of ways, from Extended RAC, Stretch RAC, and Campus RAC to Metro RAC and Geo RAC.

Marlin has been a professional friend of mine for two and a half years. I pulled Marlin aside when he was completely done conversing with people post-session to compliment him on the session and ask him about three concerns of mine.

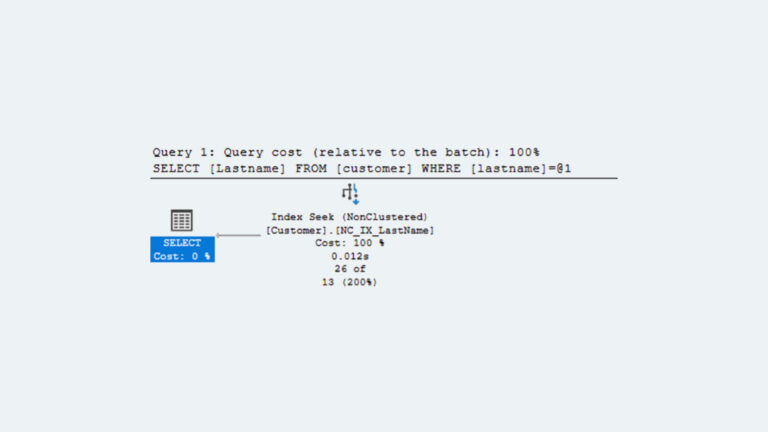

ERRATA ON RAC INTERCONNECT LATENCY AND NODE EVICTIONS

Marlin made the point at least three times during the session that if you exceed 5 ms latency on the RAC interconnect, you are at risk for and/or will get node evictions. “Latency is the primary focus of the preso. Oracle RAC (interconnect) needs less than 5 ms RT or else you get node evictions pretty quickly.” I asked Marlin whether, in his field practice, he had experienced such evictions or even heard of them. He told me that significant network latencies at the court customer induced IP-based storage failures, and that RAC node evictions occurred because of those storage failures. Other than that, he hadn’t experienced or heard of latency-induced RAC node evictions.

Ok, I totally get that. But that is not RAC interconnect latency over 5 ms causing any of Oracle’s four eviction mechanisms to induce RAC instance evictions. I said that, if memory serves, Oracle came up with the 5 ms latency requirement when co-engineering stretch RAC with EMC Corporation. I’ve been told about WAN simulation tooling tests that artificially induced RAC interconnect latencies as high as 100 ms without Clusterware RAC instance evictions. That doesn’t surprise me at all. In single-instance Oracle, the OLTP performance rate-limiter is ultimately redo log write performance. In OLTP RAC, the ultimate performance rate-limiter becomes RAC interconnect performance. It stands to reason that Oracle would require very conservative interconnect latency numbers as a matter of policy to prevent RAC from delivering poor downstream performance.
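For anyone who wants to see how their own interconnect measurements compare with the 5 ms figure, here is a minimal Python sketch. It assumes you have already collected round-trip samples in milliseconds with whatever tool you prefer. The function name `summarize_latency` and the sample values are hypothetical, and the 5 ms limit is simply the figure quoted in the session, treated here as a performance guideline rather than an eviction trigger.

```python
from statistics import median

# Round-trip figure quoted in the session (assumption: used only as a guideline).
RT_LIMIT_MS = 5.0


def summarize_latency(samples_ms, limit_ms=RT_LIMIT_MS):
    """Summarize round-trip samples (in milliseconds) against a latency limit."""
    if not samples_ms:
        raise ValueError("need at least one sample")
    # Samples strictly above the limit are the ones worth investigating.
    over = [s for s in samples_ms if s > limit_ms]
    return {
        "count": len(samples_ms),
        "median_ms": median(samples_ms),
        "max_ms": max(samples_ms),
        "over_limit": len(over),
        "pct_over_limit": 100.0 * len(over) / len(samples_ms),
    }


if __name__ == "__main__":
    # Hypothetical samples: four fast round trips and one slow outlier.
    print(summarize_latency([0.4, 0.6, 0.5, 7.2, 0.5]))
```

A summary like this says nothing about evictions. It only shows how often the interconnect strays past the conservative number, which is where the performance conversation starts.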

CLARIFICATION ON ASM CPU OVERHEAD

Sudhir had said ASM has a CPU cost because it manages the mirror itself. I noted to Marlin that Oracle’s best practice for ASM is “External Redundancy,” meaning the SAN deals with mirroring. When configured as such, ASM becomes a raw device mapper. The ASM instance idles with negligible CPU overhead and only comes alive to rebalance the storage stripes whenever a storage device is added or removed. ASM’s “Normal Redundancy” setting was put in the product for the benefit of lower-end configurations, to bring the server’s internal disk into disk groups.

CLARIFICATION ON DATA GUARD AND BLOCK CORRUPTION

At the end of the session, Marlin used a single sentence advocating the use of Oracle Data Guard to avoid corruption to the Oracle database. I heard my good professional friend Don Sullivan make the same argument in a session a couple years ago. Don and I debated the issue at the time.

Two facts exist: 1. House of Brick used to do Data Guard implementations for the majority of our Oracle customers ten years ago. 2. House of Brick is rarely asked to do Data Guard implementations today. Yet our customers enjoy far more DR coverage than ever before, both by workload count and as a percentage of the workload stack. That is because more enterprises are leveraging array or host replication at a lower layer of the stack. When combined with the VMware virtual shroud around the workload, this can rocket DR’s reliability to close to 100%, and do so without the human expense, typo risk, and synchronization-timing risk associated with technicians running around with clipboards. That level of DR reliability was manifestly impossible in native hardware environments with Data Guard protecting the database transaction vectors only. Furthermore, there is the potential for Oracle license savings depending on how DR testing is architected.

Replication products can and should be configured to do CRC block checking on the standby end. Despite that, yes, there is still the very remote possibility that a block could be transmitted to the DR site with logical internal corruption that Oracle Data Guard would detect and replication wouldn’t. (I’ve never heard of such an occurrence out of our customer base.) Should someone be worried about it, they could leverage any number of risk management strategies:

- Simulcast both array replication with its more robust and more reliable DR, and Data Guard

- Upon a switchover trial or an actual switchover, run an RMAN level 0 backup (either an actual backup or a VALIDATE) so that any corrupt block is logged in the alert log and/or V$DATABASE_BLOCK_CORRUPTION

- Use RMAN RECOVER BLOCK to repair as needed. Block repair has been available since Oracle 9i, where the command was BLOCKRECOVER.

- Provide more internal instance risk management through enabling DB_BLOCK_CHECKSUM or the heavier DB_BLOCK_CHECKING in the source instance. To do so may have no operational impact at all, especially within the chorus of House of Brick’s VBCA customers that complain they’re swimming in CPU.

- Etc.
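To make the detect-then-repair steps in the list above more concrete, here is a minimal Python sketch that turns rows shaped like V$DATABASE_BLOCK_CORRUPTION (FILE#, BLOCK#, BLOCKS) into RMAN block recovery commands. It does not connect to a database. The function name `build_recover_commands` and the sample rows are hypothetical, and the output assumes the `RECOVER DATAFILE ... BLOCK ...` form used by RMAN in 11g and later.

```python
from collections import defaultdict


def build_recover_commands(rows):
    """Build RMAN block recovery commands.

    rows: iterable of (file_no, first_block, block_count) tuples, mirroring the
    FILE#, BLOCK#, and BLOCKS columns of V$DATABASE_BLOCK_CORRUPTION.
    """
    blocks_by_file = defaultdict(set)
    for file_no, first_block, block_count in rows:
        # Each row describes a run of contiguous corrupt blocks; expand it.
        blocks_by_file[file_no].update(range(first_block, first_block + block_count))

    commands = []
    for file_no in sorted(blocks_by_file):
        block_list = ", ".join(str(b) for b in sorted(blocks_by_file[file_no]))
        commands.append(f"RECOVER DATAFILE {file_no} BLOCK {block_list};")
    return commands


if __name__ == "__main__":
    # Hypothetical rows: two corrupt blocks in datafile 8, one in datafile 2.
    for command in build_recover_commands([(8, 13, 2), (2, 19, 1)]):
        print(command)
```

In practice, RMAN's `RECOVER CORRUPTION LIST` repairs everything recorded in the view in one step, so a script like this is mainly useful when you want to review or repair specific blocks individually.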

My point is we need to be careful not to spook people. If we’re going to mention the remote risk of logical block corruption at all, we should also be careful to at least allude to Data Guard’s significantly-escalated risks when used as a stand-alone solution, as well as other methods to mitigate and manage the logical block corruption risk.

VAPP2980 VMware Global Support Services Panel – What Goes Wrong and How We Fix It – 4:00PM

This for me was the most critical session of the show. That holds, even though to my knowledge House of Brick has never needed VMware GSS services. Here is a subset of my extensive notes on this session.

Mike Matthies – Senior Manager, Technical Services for vCloud Air – facilitated the panel. His former responsibilities include managing the EA group and also managing the Database Management group, including Oracle.

The other panelist I was particularly interested in was Matt Scott – Senior Oracle Engineer, formerly with Oracle and JD Edwards.

Matt: The team was formed in 2009 out of concern that customers may be inadequately equipped to converse with Oracle Support. GSS will even open Service Requests with Oracle if needed. Oracle is very open to working with customers and GSS to resolve issues. All of Matt’s GSS database support colleagues are DBAs. They were Oracle Corporate employees who joined VMware. They are global 24×7. They all have different app backgrounds. Call your TSE or TAM with an Oracle request and you get a dedicated team looking at your issue and going to bat with Oracle. The GSS perception is that when they’re also on the phone with Oracle, Oracle is much more willing to work with GSS and the customer on a conference call. Oracle owns so many apps. Sometimes it’s hard to look at a log and figure it out. Open a case with GSS and they’re normally on a Webex with you within 20-30 minutes, and we normally find the issue within a few hours.

The most common Oracle problems encountered by GSS:

- vCenter issues with Oracle DB under it

- Numerous RAC set-up questions like RDM or ASM

- A few performance issues on Oracle RAC

- A lot of issues that turn out to be storage configuration problems

GSS has seen it all from a storage and network standpoint. In many cases GSS gets copies of customers’ DBs. That DB copy transmission process from the customer to GSS is very secure and can involve shipping a GSS-provided storage device if needed. GSS reverse-engineers when necessary to solve the problem.

Matt: Most of the calls relate to Oracle middleware product support. Oracle’s acquisition of BEA led to middleware changes. You gather logs and show GSS via Webex or in person (we will go on-site to the customer if needed). The middleware piece is where we get most of our push-back from Oracle. We work with our engineering department as well as Oracle’s Development.

GSS has an excellent working relationship with Oracle. This isn’t just a forced relationship due to both organizations’ contractual support collaboration obligations under TSANet. Matt receives 15-20 requests per week directly from Oracle for GSS to get involved. 98-99% of issues are resolved by the GSS team. In Matt’s experience, the GSS team has discovered only two Oracle bugs. VMware saw one or two driver issues on its side that required escalation to development.

If you just want to talk to someone about config at three in the morning, go ahead and give GSS a call because it has world-wide 24 hour teams.

GSS has done Oracle on VMware thousands of times. It’s almost a commodity. Matt is willing to sit down with your folks to discuss how low the Oracle Support risk is. A lot of customers open a preventive GSS SR before they make the production P2V switch.