by Chris Vacanti, Senior Consultant

The worst disaster recovery solution is the one you think works.

For most of us in the IT industry, disaster recovery becomes a second, part-time job that we have to fit into our full-time job. In between meetings, change requests, overnight work, patching, and roll-outs, we find a minute or two to work on disaster recovery.

So we do what any good system administrator does – we make sure we have a solid backup plan. We ensure that our backups run daily, and if a job fails, we find the root cause and re-run the job.

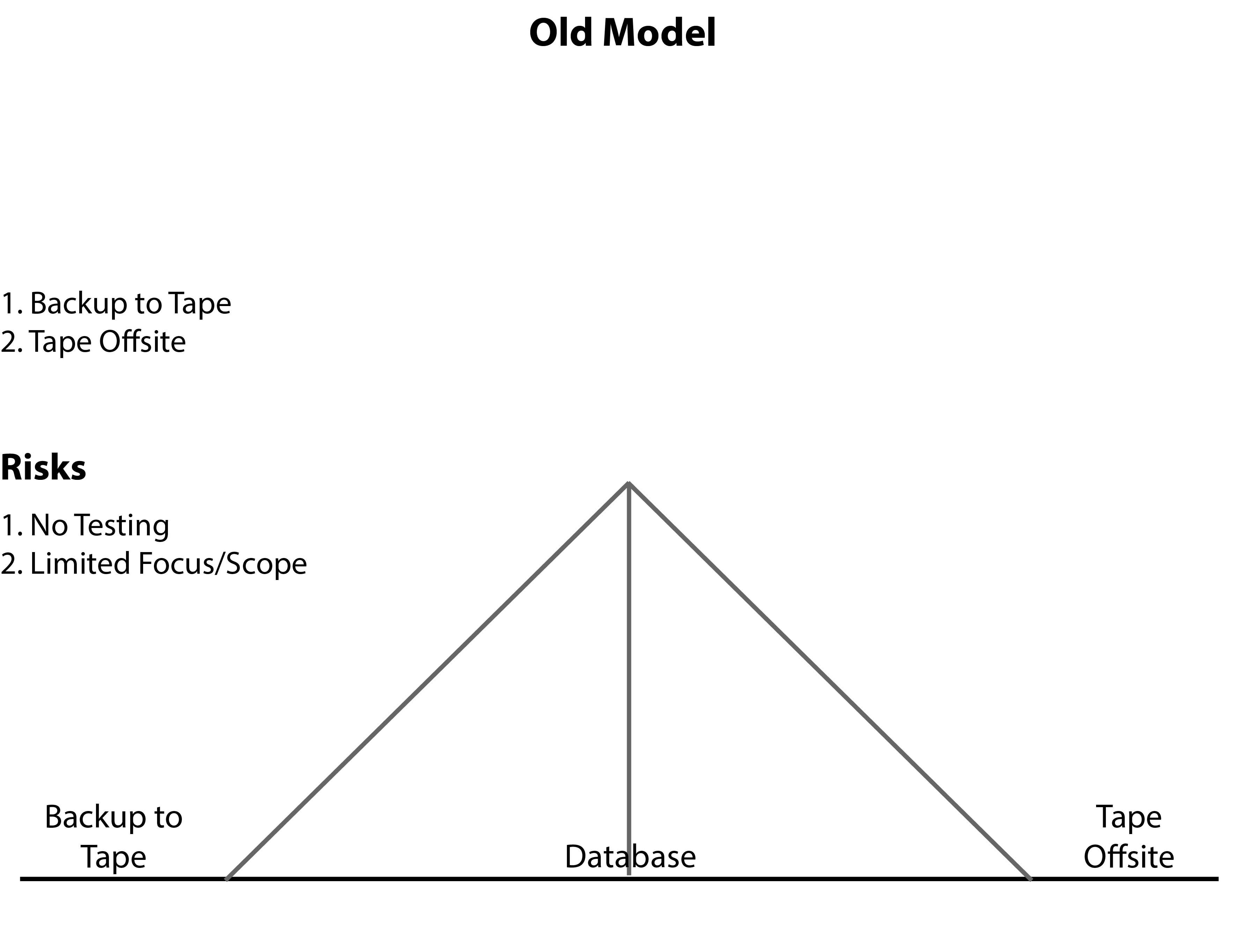

Taking it a step further, and to check DR off the list of things to do, good sysadmins will take their data offsite. Though we know this isn’t truly a DR solution, it often ends up being the only DR solution a business has. The IT staff then tells the business that it has a DR solution, so the business believes it has a DR solution that works. All the while, it is an untested, unplanned, and undefined solution.

This is a dangerous place to be in and of itself. The business hasn’t provided any input about its needs, so the solution wasn’t designed with any insight into the scope and duration of an outage the business could tolerate. This was DR 1.0.

Big events like Hurricane Katrina brought heightened attention to disaster recovery. Katrina exposed how flimsy many DR solutions really were. A business’ data protected by a weak DR solution is about as safe as a tent in a hurricane. Prior to Katrina, many businesses hadn’t given much thought to DR, and many of those that had believed their DR solution was a good one. Then reality came crashing in. It took some businesses weeks to bring their systems back online, all while losing critical revenue. Some never made it back online.

Think about this image for a second. The critical client data is represented inside the tent. As with any tent, we put down guy lines to keep it secure. One guy line represents backing up the data to tape; the other represents taking the tapes offsite. In DR 1.0, this was considered a reliable solution. When other sysadmins talked about the hours spent recovering from tape, many of us would nod knowingly. We’d “been there, done that”…and lived to tell about it. Then Hurricane Katrina showed us that this model had to change. New compliance and auditing requirements have compelled us to change as well. Increased scrutiny of downtime, along with concerns about lost revenue and reduced profitability, has also forced the industry to evolve to a new DR standard.

Introducing DR 2.0

With that, we want to introduce you to DR 2.0.

At House of Brick, we draw on decades of DR knowledge and hundreds of client interactions to build an effective DR solution, regardless of its size and scope. We’re confident that we can provide a concise, documented, planned, and tested DR solution based on a business’ specific needs and requirements.

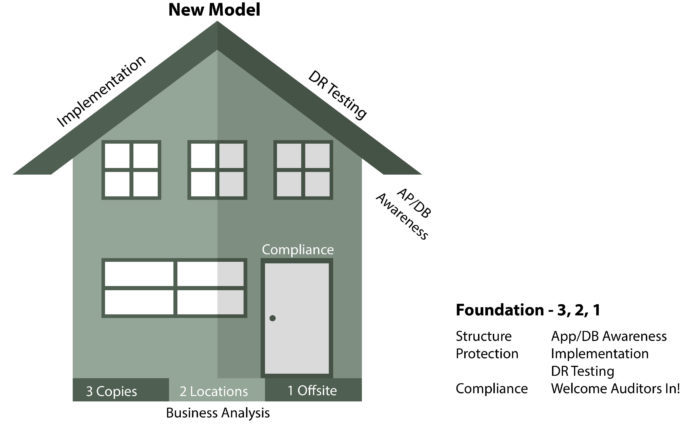

At the foundation of a good disaster recovery plan is an understanding of business requirements. This begins with a thorough analysis, including interviews with the business units and operations. I like to call it the “follow the money” trail. Equally important is determining how much downtime (the recovery time objective, or RTO) and how much data loss (the recovery point objective, or RPO) the business can incur and still remain solvent.
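To make RTO and RPO concrete, here is a minimal sketch in Python that compares a business’ stated tolerances with what a backup design actually delivers. It assumes, for simplicity, that worst-case data loss equals the interval between backups and that downtime equals the estimated restore time; a real plan would also account for the time it takes to detect the outage and decide to fail over. The names `Requirement`, `Protection`, and `gaps`, along with the example figures, are hypothetical.

```python
from dataclasses import dataclass
from datetime import timedelta


@dataclass
class Requirement:
    """Business-defined tolerances for one application (hypothetical values)."""
    name: str
    rto: timedelta  # maximum tolerable downtime
    rpo: timedelta  # maximum tolerable data loss, expressed as time


@dataclass
class Protection:
    """What the current DR design delivers (assumed, estimated figures)."""
    backup_interval: timedelta    # time between usable recovery points
    estimated_restore: timedelta  # time to bring the service back online


def gaps(req: Requirement, prot: Protection) -> list[str]:
    """Return a description of every way the design misses the requirement."""
    problems = []
    # Worst case: disaster strikes just before the next backup runs,
    # so up to one full backup interval of data is lost.
    if prot.backup_interval > req.rpo:
        problems.append(
            f"{req.name}: RPO is {req.rpo}, but backups run every {prot.backup_interval}"
        )
    # Simplification: downtime is assumed to equal the restore time.
    if prot.estimated_restore > req.rto:
        problems.append(
            f"{req.name}: RTO is {req.rto}, but a restore takes ~{prot.estimated_restore}"
        )
    return problems


# Hypothetical example: an order-entry system protected only by nightly tape.
orders = Requirement("order entry", rto=timedelta(hours=4), rpo=timedelta(minutes=15))
nightly_tape = Protection(
    backup_interval=timedelta(hours=24),
    estimated_restore=timedelta(hours=36),
)

for problem in gaps(orders, nightly_tape):
    print(problem)
```

An empty result means the design meets the stated targets on paper; only a test can confirm that the restore time estimate is realistic.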

Once requirements are determined, IT can begin to answer the question of how to keep the data protected. The solution will still be based on the familiar backup strategy of three copies of the data on two different types of storage, with one copy offsite. This technical solution, coupled with the business need, becomes the foundation of a solid DR plan.
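This backup strategy is commonly called the 3-2-1 rule: at least three copies of the data, on at least two different types of storage media, with at least one copy offsite. The sketch below checks an inventory of copies against that rule. It counts the production copy as one of the three, as many formulations do, and the `Copy` type, `meets_3_2_1` function, and site and media names are hypothetical.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Copy:
    """One copy of a data set (hypothetical inventory record)."""
    location: str  # e.g. "primary-dc", "vault"
    media: str     # e.g. "disk", "tape", "cloud-object"
    offsite: bool  # True if stored away from the primary site


def meets_3_2_1(copies: list[Copy]) -> bool:
    """True if there are >= 3 copies on >= 2 media types with >= 1 offsite."""
    return (
        len(copies) >= 3
        and len({c.media for c in copies}) >= 2
        and any(c.offsite for c in copies)
    )


# Hypothetical DR 1.0 setup: production disk, a local disk backup, and tapes sent offsite.
tape_strategy = [
    Copy("primary-dc", "disk", offsite=False),  # production data
    Copy("primary-dc", "disk", offsite=False),  # local backup
    Copy("vault", "tape", offsite=True),        # tapes taken offsite
]
print(meets_3_2_1(tape_strategy))
```

Note that this setup passes the rule yet says nothing about RTO or RPO; the rule describes where copies live, not how quickly or completely the business can recover from them.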

After the plan is in place, the operational walls that protect the data are implementation and testing. After implementation, most IT organizations report back to the business that their DR solution has been implemented and is solid. If any testing is done, it is usually minimal. But with great products like VMware Site Recovery Manager (SRM) and Veeam, businesses can test their full DR solution in an isolated bubble. This is as close to a production failover as one can get without actually failing over. Keep in mind that IT operations must identify every server and service that needs to be in the test bubble for the test to be effective.
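Identifying every required server is essentially a dependency walk: start from the applications the business wants to test and follow each dependency until nothing new turns up. A minimal sketch, assuming a hand-maintained dependency map, is shown below. The server names and the `DEPENDS_ON` map are hypothetical, and this is independent of how SRM or Veeam define their own test environments.

```python
from collections import deque

# Hypothetical dependency map: each server lists what it needs in order to work.
DEPENDS_ON = {
    "order-web": ["order-app", "dns", "ad-dc"],
    "order-app": ["order-db", "ad-dc"],
    "order-db": ["ad-dc", "dns"],
    "ad-dc": ["dns"],
    "dns": [],
}


def bubble_members(entry_points, depends_on):
    """Return every server that must be in the test bubble (transitive closure)."""
    needed = set()
    queue = deque(entry_points)
    while queue:
        server = queue.popleft()
        if server in needed:
            continue  # already counted; this also prevents loops on circular dependencies
        needed.add(server)
        # A server missing from the map raises KeyError, so gaps in the
        # documentation surface during planning instead of during the test.
        queue.extend(depends_on[server])
    return needed


print(sorted(bubble_members(["order-web"], DEPENDS_ON)))
```

Infrastructure services such as DNS and directory servers are easy to forget because no one tests them directly, yet in this example every application depends on them.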

A successful implementation and test top off the DR solution and open the door to auditing and compliance. IT leadership is then able to present tested playbooks to auditing and compliance committees. Business partners can get into the test environments and know that their data and operations are truly protected. No one has to think that their DR solution works – they know.

Engaging House of Brick

I’d invite business leaders to ask their IT staff whether business operations can access their data in a test bubble during a DR test. Can they truly place an order, look up client accounts, pull up balances, etc.?

I’d also encourage IT operations teams to test their DR solutions. Do you have the daily bandwidth to test and validate your DR solution? Do you need help planning or implementing a concise DR solution? If either scenario applies to you, contact us – we can help.