by Jonas Mason, Principal Architect

House of Brick Consultants assess Oracle instance performance for clients large and small and often find non-default parameters that are deprecated, hidden, or undersized.

These non-default parameters can cause out of memory errors, increase disk I/O, add unnecessary processes, and distort explain plan generation.

This blog covers how to identify and remediate non-default parameters that may be problematic, and I have found that this process is useful even if you don’t change any parameters. Spfiles/pfiles are valuable company assets that should be subject to version control.

Requiring Oracle DBAs to capture, present, and justify non-default parameters to technical management is a worthwhile endeavor. Understanding the when and why behind changes to non-default parameters over time is also valuable. Managers should hear a logical narrative on why non-default parameters have been set. If the narrative doesn’t make sense, managers should be suspicious and ask more questions.

In this blog I will share examples that House of Brick consultants often find in the field, and after reading it you will have a framework to identify, analyze, and remediate issues. I generally stick with default values for a parameter unless the justification narrative is strong.

Please note: As with all system changes, test and validate the impact of any parameter change prior to production deployment.

Identifying Non-Default Oracle Parameters

Non-default Oracle parameters can be identified a number of ways:

- Non-default parameters are listed at the bottom of AWR and statspack reports.

- Creating a pfile from spfile and saving this is simple.

- The select statement below can also be run and saved to file:

select name,display_value from v$parameter where isdefault = 'FALSE' order by name;

Got Memory Constraints?

Databases that grow, get migrated and upgraded over longer periods of time (15-20 years) often inherit parameters that pass down memory constraints that were relevant long ago. These memory parameters can mix Automatic Memory Management (AMM) and Automatic Shared Memory Management (ASMM), along with other parameters, which have been deprecated in the most recent version of Oracle installed.

AMM vs. ASMM

I sometimes find that MEMORY_TARGET, MAX_MEMORY_TARGET, SGA_TARGET, and SGA_MAX_SIZE are all set for the same instance at different sizes. Decide whether you want to use AMM or ASMM and unset the parameters for the alternative when you do. If you have a large enough SGA, we recommend this be configured to use HugePages, which requires that SGA_TARGET and SGA_MAX_SIZE be set (ASMM).

Since you are reviewing these parameters, check what percentage of server memory your SGA and PGA consume for all instances. How much available memory is there on the server beyond this? If significant enough, review AWR/statspack reports for SGA and PGA target advisories during peak periods to see if increasing these allocations could result in reduced disk I/O.

db_cache_size

I have seen this parameter set to 32 MB on instances with server memory and SGA set to 10 GB or more. The recommendation would be to reset this parameter to the default of 0 and allow Oracle to manage the cache size dynamically.

db_16k_cache_size

I have also seen the 16k block cousin of db_cache_size set unreasonably low. In some applications, the indexes were placed in 16k tablespaces and this parameter was required to accommodate them. Resetting this parameter returns the value to a default of 0, but would prevent your instance from restarting. As a result, I typically set db_16k_cache_size to 20-30% of SGA size (to start) if modifying an unreasonably low value.

sort_area_size

The sort_area_size had a default of 65536 bytes in Oracle 10G, and I sometimes find this value set slightly larger, at .5 MB. While this may have been appropriate over 10 years ago given memory constraints at the time, it creates a bottleneck now. I recommend resetting this, or increasing it to accommodate sorts on expensive table joins.

shared_pool_size

Per Oracle, if SGA_TARGET is set, the default for this parameter is 0, which allows Oracle to manage this memory pool dynamically. I recommend going with the defaul

large_pool_size

Per Oracle, if SGA_Target is set, the default for this parameter is 0. Again, this allows Oracle to manage memory dynamically.

log_buffer

I have seen this set parameter set artificially low. Reset it to go with Oracle defaults unless you can articulate why you require the lower setting.

Got Too Many Processes?

DB Writer Processes

The number of DB Writer processes specified should be based on peak block change rates, write rates, and I/O characteristics. If datafiles are not being changed at a high rate, and you are unsure of the multi threaded I/O characteristics of your server, question why this may be set at anything other than it’s default which is 1 or CPU_COUNT/8.

Log Writer Processes

I have seen 10 log writer processes specified for instances that have very little redo log activity. There is no point creating additional processes on a server when these address a bottleneck that doesn’t exist. As with DB Writer Processes, depending on I/O constraints, additional processes could compound performance problems.

Do you really know Oracle’s Optimizer better than Oracle?

HOB consultants often find parameters implemented that influence the Optimizer’s generation of explain plans. Sometimes Oracle Support recommends these changes. You should confirm if setting those parameters still applies to your current version, and eliminate them if not. Below is a sample of some of those parameters.

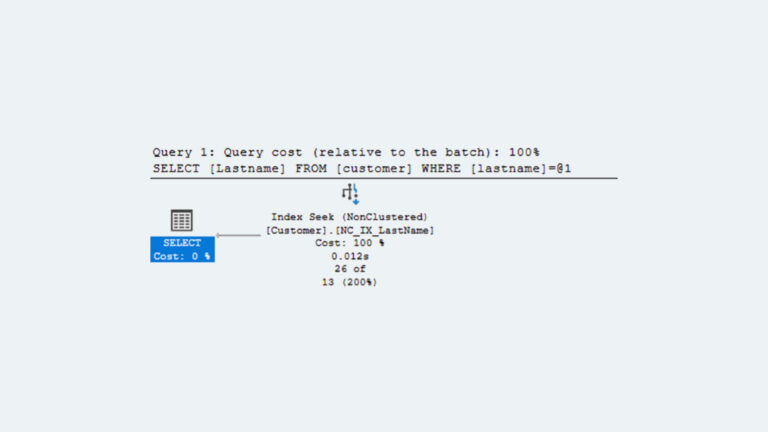

OPTIMIZER_INDEX_COST_ADJ

Per Oracle, “OPTIMIZER_INDEX_COST_ADJ lets you tune optimizer behavior for access path selection to be more or less index friendly—that is, to make the optimizer more or less prone to selecting an index access path over a full table scan.

The default for this parameter is 100 percent, at which the optimizer evaluates index access paths at the regular cost. Any other value makes the optimizer evaluate the access path at that percentage of the regular cost. For example, a setting of 50 makes the index access path look half as expensive as normal.”[1]

Full table scans are not necessarily bad if indeed most/all records must be evaluated to satisfy a query. Forcing the optimizer to use indexes when a full table scan is more appropriate, can add to explain plan cost. Why attempt to force both a read on an index and a table when a full table scan is more efficient? Unless a case can be clearly made for deviating from the default, defer to Oracle on this.

OPTIMIZER_INDEX_CACHING

Per Oracle, “OPTIMIZER_INDEX_CACHING lets you adjust the behavior of cost-based optimization to favor nested loops joins and IN-list iterators. Setting this parameter to a higher value makes nested loops joins and IN-list iterators look less expensive to the optimizer.”[2]

The default of 0 is recommended.

Hidden parameters

Were the hidden parameters in your instance implemented at the direction of Oracle Support? Are those hidden parameters still relevant if you have upgraded Oracle since that time?

I recently reviewed an instance with over 150 hidden parameters implemented. The client did not recall implementing these parameters and when I questioned the need for them before an upgrade, they reached out to their software vendor to validate. It turned out the vendor had never asked for these parameters to be modified and urged their client to drop them, unless these hidden parameters had been recommended by Oracle Support. The client had no record of being directed to implement these parameters, so we dropped the hidden parameters and vetted the changes in the QA process.

The impact of these hidden parameters on performance was not entirely clear or measurable; however, the production instance was returned to a state that matched what the vendor recommended. Having the optimizer generate explain plans in the way the vendor expects them facilitates vendor support and troubleshooting efforts.

Conclusion

Performing this inventory exercise is not difficult and can help clear up anomalies in your environment that may be hobbling performance. This framework should facilitate a productive internal conversation that either results in change or no change. If no parameter changes result, the business, tech managers, and DBAs can rest assured that they completed a due diligence review. If parameter changes result, your systems could avoid potential performance issues and outages, as well as contain or reduce hardware and software costs.

[1] https://docs.oracle.com/cd/B19306_01/server.102/b14237/initparams144.htm#REFRN10143

[2] https://docs.oracle.com/cd/B19306_01/server.102/b14237/initparams143.htm#REFRN10142