Mike Stone, CIO & Principal Architect

DR Considerations

Where’s the easy button for DR? A number of products promise one, but the problem is that it’s impossible to truly deliver unless your entire infrastructure is virtualized according to someone else’s idea of how you should run things. In carefully constructed circumstances, some vendors can demonstrate DR in a general case. Oracle falls into this category with their latest line of offerings, although they have extended the general case a little with their own scripting. VBlocks can match that functionality, but the Oracle-specific customizations are notably missing.

However, customers quickly realize that these carefully constructed demos remove many of the infrastructure tuning and customization options. In fact, an implementation that deviates in any way from the vendor-recommended configuration will probably need review and customization in order to function properly at an alternate site. Enter vendor and/or third-party consultants to help customers with a more traditional DR evaluation exercise.

At House of Brick, we have helped customers through many of these types of exercises with a variety of packaged and custom applications over the years. The good news is that technology is getting better when it comes to creating a more virtual infrastructure. The bad news is that this progress typically comes with a significant price tag.

This article starts with the lowest common denominator for those of us with Oracle databases in our technology list – the Oracle database software stack. It also starts with the assumption that the host is going to have a different IP address depending on which site it is running from.

Storage – There are many interesting possibilities for replicating database storage over long distances. There are product-specific solutions for replicating a database, such as Data Guard. However, incorporating technologies from multiple vendors into any comprehensive DR plan automatically increases the complexity. Other benefits aside, we recommend considering options from your storage vendor that allow logical consistency to be maintained between two sites. These offerings have been shown to adequately protect all common RDBMS technologies. As a customer recently put it, “If we trust {our SAN vendor} to maintain consistent data locally, and they advertise the capability to maintain that consistency across enclosures and over arbitrary distances, then we should at least consider trusting them to do it for us.” At HoB, our default position is generally to push a capability as far down into the stack as possible. In the case of replication, that is the storage appliance itself.

Network – As I already mentioned, for this article we are assuming that the IP address has to change between sites. There are networking options from Cisco and other vendors that remove that assumption, and with it the complexity we are addressing. At HoB, we like these “stretch” or “flexible” networking technologies, but implementing them can be costly, both in the human resources needed to upgrade and modify your network infrastructure and in licensing. Having said that, this is definitely a critical component of any true easy button for DR.

Tooling – It is very beneficial to have a tool that can automate some of the complexity described above. This can be done more easily for virtual machines than for physical ones. Oracle VM (OVM), in conjunction with Oracle Cloud and other ‘Exa’ options, gives the Oracle customer many options to consider. VMware and Microsoft also have some compelling options.

Oracle RDBMS Software

Ignoring how we got here, the next big question is, “How do I reconfigure my Oracle database workload to be fully functional in this new environment after its IP address changes?”

Host Considerations

Any hard-coded IP or subnet information is likely going to need to be changed. Some common examples are:

/etc/hosts, /etc/hosts.allow, /etc/sysconfig/iptables, and /etc/sysconfig/network. In addition, we may need to update SSH known hosts, both in users’ home directories and in the global configuration in /etc/ssh.

These types of changes are typically easy to configure and automate. In our experience, iptables (firewall) is the only one that is likely to require a restart.
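As a minimal sketch of what that automation might look like, the Python function below remaps the address column of hosts-file text while leaving hostnames, spacing, and comments alone. The function name `remap_hosts`, the sample addresses, and the hostnames are all hypothetical; a real script would also need to handle the other files listed above, each of which has its own format.

```python
import re
from typing import Mapping


def remap_hosts(text: str, ip_map: Mapping[str, str]) -> str:
    """Return hosts-file text with the address column remapped via ip_map."""
    out = []
    for line in text.splitlines():
        # Set any trailing comment aside so it is never rewritten.
        body, sep, comment = line.partition("#")
        fields = body.split()
        if fields and fields[0] in ip_map:
            new_ip = ip_map[fields[0]]
            # Swap only the first field (the address); hostnames and spacing stay.
            body = re.sub(r"^(\s*)\S+", lambda m: m.group(1) + new_ip, body, count=1)
        out.append(body + sep + comment)
    trailing = "\n" if text.endswith("\n") else ""
    return "\n".join(out) + trailing


if __name__ == "__main__":
    # Hypothetical primary-site entry and its DR-site replacement.
    sample = "10.1.1.10  db01.example.com db01 # primary\n127.0.0.1 localhost\n"
    print(remap_hosts(sample, {"10.1.1.10": "10.2.1.10"}), end="")
```

Matching on the whole first field, rather than doing a plain string replace, avoids accidentally rewriting an address such as 10.1.1.100 when only 10.1.1.10 is meant to change.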

Single-Instance

The only component of single-instance RDBMS where the IP address of the server should be a concern is the Oracle listener. In the good old days, we got a default listener configuration that told the listener to listen on all network interfaces and nobody cared if the IP address changed. Today, however, security concerns have taken over and we typically end up with a listener configuration that specifies a particular interface, or interfaces (by IP address) to listen on.

The good news is that we can typically just update the listener configuration file (listener.ora) and restart the listener. This is also a fairly easy process to automate.
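One way this might be automated is sketched below in Python: the function rewrites `(HOST = ...)` entries in listener.ora text when they match the old address. The function name `retarget_listener` and the sample addresses are hypothetical, and the sketch assumes the listener is bound by literal IP address rather than by hostname.

```python
import re


def retarget_listener(text: str, old_ip: str, new_ip: str) -> str:
    """Return listener.ora text with (HOST = old_ip) entries pointed at new_ip."""
    # Tolerate flexible spacing and case around HOST; the closing parenthesis
    # in the pattern keeps 10.1.1.10 from matching inside 10.1.1.100.
    pattern = re.compile(
        r"(\(\s*HOST\s*=\s*)" + re.escape(old_ip) + r"(\s*\))",
        re.IGNORECASE,
    )
    return pattern.sub(lambda m: m.group(1) + new_ip + m.group(2), text)


if __name__ == "__main__":
    # Hypothetical listener definition bound to a primary-site address.
    sample = (
        "LISTENER =\n"
        "  (DESCRIPTION =\n"
        "    (ADDRESS = (PROTOCOL = TCP)(HOST = 10.1.1.10)(PORT = 1521))\n"
        "  )\n"
    )
    print(retarget_listener(sample, "10.1.1.10", "10.2.1.10"), end="")
```

After writing the updated file back, the listener still needs to be restarted (for example with `lsnrctl stop` followed by `lsnrctl start`) before the new address takes effect.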

Oracle Cluster Ready Services (CRS) aka Grid Infrastructure

Here’s where things start to get wrapped around the axle for a DR exercise. Oracle CRS has traditionally been very finicky and somewhat complicated to reconfigure. However, in 11g and particularly 11gR2, Oracle has made strides in simplifying things a bit.

The first issue is that if the hostname also changes along with the IP, that change has implications for the Oracle software inventory of clustered Oracle homes. But even this can be managed. The simplest way to manage this is to “detach” the Oracle Home from the inventory and follow the Oracle documentation for cloning an Oracle Home. This will apply post-installer customizations that may be impacted by a host change and once again “attach” the Oracle Home to the inventory. Repeat this process for all clustered homes on all cluster nodes.

Next we have the cluster private interconnect. There is rarely a reason to change this since it is typically not routed and is only required for inter-node communications related to the cluster itself. So, we’ll assume for now this hasn’t changed.

All that brings us to the heart of the problem and the reason for this article. What do I need to change when my primary (public) IP address must be changed either for DR or other reasons?

In the simplest case, where only the public IP has changed, the only components that are impacted are the cluster database listeners, the host VIPs, and the SCAN. The following is a sample of the commands required to correct these items. The commands below can be run from any active node in the cluster.

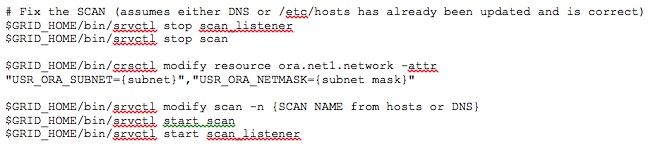

The following commands are required to update the SCAN. It is notably lacking specifics because the tool picks up the information from its environment (such as /etc/hosts or DNS):

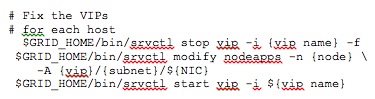

And that leaves our VIPs, which can be addressed as follows:

These commands only take a few seconds and will complete the reconfiguration of your clusterware environment.
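For orientation, the VIP step on 11gR2 usually comes down to a stop, modify, start sequence per node. The Python sketch below only builds those command lines and does not run them. The `srvctl modify nodeapps -n <node> -A <address>/<netmask>/<interface>` form is the 11gR2 syntax as generally documented, but treat it as an assumption and check the srvctl reference for your release; the node name, address, netmask, and interface shown are hypothetical.

```python
import ipaddress
from typing import List


def vip_commands(node: str, vip: str, netmask: str, interface: str) -> List[List[str]]:
    """Build (but do not run) the srvctl calls to move one node's VIP."""
    # Fail early on malformed input; both calls raise ValueError.
    ipaddress.ip_address(vip)
    ipaddress.ip_network("0.0.0.0/" + netmask)
    return [
        # Assumed 11gR2 syntax; verify against your release before use.
        ["srvctl", "stop", "vip", "-n", node, "-f"],
        ["srvctl", "modify", "nodeapps", "-n", node,
         "-A", "{}/{}/{}".format(vip, netmask, interface)],
        ["srvctl", "start", "vip", "-n", node],
        # Display the resulting VIP configuration for a visual check.
        ["srvctl", "config", "nodeapps", "-a"],
    ]


if __name__ == "__main__":
    # Hypothetical DR-site values for a single cluster node.
    for cmd in vip_commands("node1", "10.2.1.11", "255.255.255.0", "eth0"):
        print(" ".join(cmd))
```

Generating the commands as a dry run first makes it easy to review them for each node before anything touches the cluster; the modify step generally has to be run as root.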

Conclusion

As I mentioned at the beginning, there are a number of complicating factors, including the requirement to also change the hostname. With Grid Infrastructure, ASM is also a consideration. Hopefully you’ve put your CRS registry and voting disks in ASM, but regardless there are possible issues here to address.

Through extensive testing and repetition across the last few versions, we have perfected a series of steps that take the general case and assume the worst. The good news is that they’re fairly complete and repeatable. The bad news is that they can take 20 to 30 minutes to reconfigure everything from the ground up. These steps have been automated and are fairly easy to deploy. But I’ll leave that for another day.

Hopefully, yours is the simplest case above and this has been a useful overview that will help you along the path of cracking this nut for yourself. If not, feel free to contact us and we’ll be happy to discuss ways in which we might be able to help.