House of Brick Principal Architect

Welcome to the fifth part in our series of posts on virtualizing your business-critical SQL Servers. In this post I discuss high availability with SQL Server on VMware, including SQL Server clustering and SQL Server 2012 AlwaysOn. I had intended to conclude the series here with Disaster Recovery, but I have moved that topic into a sixth part so that this post does not take too much of your time.

High Availability and Disaster Recovery Options

Let’s start the discussion with two definitions.

High Availability (HA) is a design approach where systems are architected to meet a predetermined level of operational uptime, or a Service Level Agreement (SLA). This means systems should have appropriate levels of redundancy, but the environment should be kept as simple as possible so as not to introduce more failure points.

Disaster Recovery (DR) is the process of preparing for, and recovering from, a technology infrastructure failure that is critical to a business. The core metrics are the Recovery Point Objective (RPO) and Recovery Time Objective (RTO). The RPO is a measurement of the maximum time window where data could be lost in the event of a disaster. The RTO is the measurement of the maximum time that a core service or system can be offline after a disaster.
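To make the two metrics concrete, here is a minimal sketch that measures one incident against an RPO and an RTO. The function names, timestamps, and targets are all hypothetical and chosen only for illustration.

```python
from datetime import datetime, timedelta


def measure_recovery(last_recoverable_point, failure_time, service_restored):
    """Return (data_loss_window, downtime) for one incident as timedeltas."""
    data_loss_window = failure_time - last_recoverable_point
    downtime = service_restored - failure_time
    return data_loss_window, downtime


def meets_objectives(data_loss_window, downtime, rpo, rto):
    """True only if both the data-loss window and the downtime are within target."""
    return data_loss_window <= rpo and downtime <= rto


# Hypothetical incident: last recoverable point at 01:45, failure at 02:00,
# service back online at 02:50.
loss, outage = measure_recovery(
    datetime(2013, 1, 1, 1, 45),
    datetime(2013, 1, 1, 2, 0),
    datetime(2013, 1, 1, 2, 50),
)
ok = meets_objectives(loss, outage, rpo=timedelta(minutes=15), rto=timedelta(hours=1))
print(loss, outage, ok)
```

In this made-up incident the data-loss window is 15 minutes and the outage is 50 minutes, so a 15-minute RPO and a one-hour RTO are both met, but only just.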

High availability is not disaster recovery, and disaster recovery is not high availability. Do not confuse the two, and do not assume that a solution for one is the same solution for the other.

To Cluster, or not to Cluster

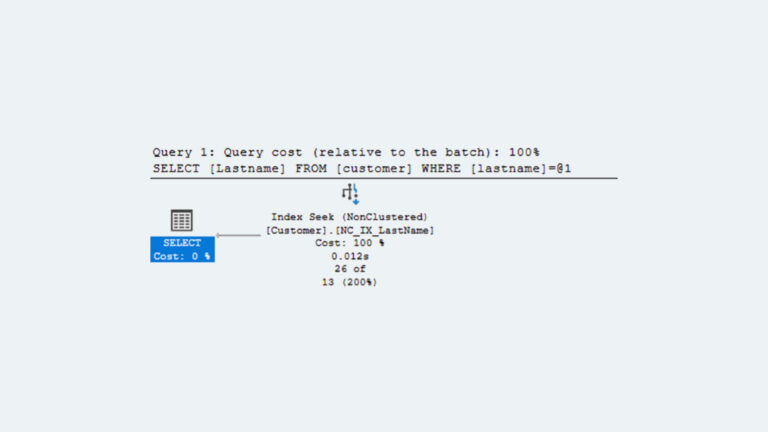

Whenever the topic of HA for SQL Server comes up, people always gravitate towards Microsoft Failover Clustering (MFC) of SQL Server instances. In some situations in the physical world, I feel that clustering is a very viable solution. Other solutions exist: database mirroring, log shipping, or transactional replication, to name a few. Once you introduce SQL Server on top of VMware vSphere, your HA options grow, and I feel that clustering is actually NEEDED far less than people think.

Whenever I talk over the scenarios with clients, I always start with a handful of questions to get people talking.

Q: Does this SQL Server have less than a four-minute SLA for unplanned outages?

If the client answers “yes” to this question, MFC could be a good solution, although it is not always the right one, so I continue with the remaining questions. If the client answers “no”, the remaining questions might be irrelevant.

Q: Do your administrators currently utilize a rolling maintenance strategy with existing clusters?

If a rolling maintenance strategy is not currently used, then planned outages will still drag on and possibly interrupt established SLAs.

Q: Is the application or middle-tier cluster-aware?

By asking this question, I am asking whether the application has been coded to understand that a pause in SQL Server responses can be caused by a cluster-node failover, and to simply wait for the second node to begin responding. It should not return errors during the failover period. If the application cannot handle this task, you are guaranteed to experience application downtime or errors while the cluster is failing over. This window could be as large as three minutes, depending on the speed of the failover and of SQL Server starting up on the second node.
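As a rough illustration of what “cluster-aware” can mean in application code, here is a minimal retry sketch. It is not taken from any particular application: `run_with_failover_retry`, `operation`, and `is_transient` are hypothetical names, and the 180-second default simply mirrors the three-minute window mentioned above. Which driver errors count as transient is something you would have to decide for your own data access layer.

```python
import time


def run_with_failover_retry(operation, is_transient, max_wait_seconds=180.0,
                            initial_delay=1.0, max_delay=15.0,
                            sleep=time.sleep, clock=time.monotonic):
    """Call operation() and keep retrying while a failover appears to be in progress.

    operation    -- zero-argument callable that opens a connection and does the work
    is_transient -- callable deciding whether an exception looks like a failover pause
    Errors that are not transient are re-raised immediately; transient errors are
    re-raised once the next wait would run past max_wait_seconds.
    """
    deadline = clock() + max_wait_seconds
    delay = initial_delay
    while True:
        try:
            return operation()
        except Exception as exc:
            if not is_transient(exc) or clock() + delay > deadline:
                raise
            sleep(delay)                       # wait for the second node to come up
            delay = min(delay * 2, max_delay)  # back off, but cap the wait
```

A caller would wrap each unit of database work, for example `run_with_failover_retry(load_orders, lambda e: isinstance(e, ConnectionError))`, where `load_orders` is again a hypothetical function. The work being retried needs to be safe to run more than once.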

Q: Does the appropriate level of technical expertise exist to support clustering?

This question bothers some folks, but it needs to be asked. The truth of the matter is that in a large percentage of the clustered environments that I encounter, the SQL Servers experience greater amounts of instability and unplanned downtime than if the SQL Server were just a standalone instance without being clustered. It sounds counter-intuitive, but if clustering is not installed, configured, and managed appropriately, the complexity of the environment will usually lead to problems that manifest in outages. A single-instance SQL Server is nowhere near as complex as a clustered SQL Server, and therefore the simplicity of the environment contributes to its stability.

Weigh the responses to these questions carefully.

You have to decide if the extra complexity of MFC will truly help the organization, do nothing at all, or worst of all, hurt it. By hurting it, I do mean substantial impacts to the day-to-day operations of your business.

What are my alternatives to MFC?

I start this deep dive with alternatives to MFC before tackling MFC itself for a simple reason: I think that a large number of the organizations I encounter are using clustering unnecessarily, and that it overcomplicates their environment without merit. A number of solutions exist that can offer equal or, in some situations, greater levels of HA than clustering. These solutions include a single instance of SQL Server on a VMware VM with VMware High Availability (HA) enabled, SQL Server mirroring, SQL Server 2012 AlwaysOn, and old-school transactional replication or log shipping.

Single Instance SQL Server on VMware

A large number of the environments that I work with are fairly simple and have established adequately sized maintenance windows for routine items such as patching. An unplanned outage that lasts only a few minutes causes only a mild inconvenience for the business. In these environments, installing a single instance of SQL Server on a VM and enabling VMware HA on the VMware cluster will accommodate the business requirements for HA without introducing ANY unnecessary complexity. This option is as simple as it gets. Simply enable High Availability within the VMware vSphere cluster that the virtual machine resides in. If a physical host fails, any VMs that were running on that host are reassigned to the remaining running hosts and automatically restarted. The start-to-finish time of this operation averages around 2m 45s, depending on the amount of data that must go through crash recovery.

Enable the VM monitoring option as well. If the operating system becomes unresponsive, VMware can detect it through the VMware Tools heartbeat and will restart the VM after two minutes (configurable) if no CPU or I/O activity is detected during that window.

Of course, maintenance will result in an outage, but when a maintenance window is already established, is this really a negative attribute?

Pros:

Least complex.

Simple to manage.

Single copy of data.

Cons:

Outage from host failures or maintenance operations.

SQL Server Mirroring

SQL Server mirroring, both synchronous and asynchronous, presents a relatively simple configuration for better HA. Mirroring requires two VMs (three if a witness server is utilized), and each is configured in a nearly identical manner. Databases are configured for either synchronous or asynchronous mirroring individually. If a host fails, VMware HA can assist by restarting the failed VM, which is now the secondary node, on a different host. The secondary node fires up and then re-syncs to the new primary. The average failover time in our experience is around 45s.

Mirroring has some cons. Asynchronous mirroring requires SQL Server Enterprise edition. Mirroring is performed at the database level, so what happens if I am mirroring multiple databases for a single application (for example, SharePoint) and only one of the databases fails over for some reason? You’ve now got a problem. Also, the application must support mirroring. Newer technologies like .NET (if proper database connectivity standards are used) or ODBC with a modern SQL Server driver can handle the failover without a problem. Older technologies, however, could have a problem. The issue is that the client is responsible for knowing the addresses of both the primary and secondary nodes and for performing the appropriate failover in the application. Some legacy applications simply cannot do this. The solution is to migrate to SQL Server 2012 AlwaysOn.
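To show what “the client knows both nodes” looks like in practice, here is a minimal sketch that builds an ODBC connection string naming a mirror partner. The server and database names are hypothetical. The `Failover_Partner` keyword is the one I recall from the SQL Server Native Client ODBC documentation; treat it as an assumption and confirm the exact spelling for the driver you actually use (ADO.NET and OLE DB spell it differently).

```python
def mirrored_connection_string(principal, mirror, database,
                               driver="SQL Server Native Client 11.0"):
    """Build an ODBC connection string that names both mirroring partners.

    The driver tries `principal` first and falls back to `mirror` if the
    principal cannot be reached. All names passed in here are placeholders.
    """
    parts = [
        ("Driver", "{" + driver + "}"),
        ("Server", principal),
        ("Failover_Partner", mirror),   # assumed ODBC keyword, verify for your driver
        ("Database", database),
        ("Trusted_Connection", "yes"),
    ]
    return ";".join(f"{key}={value}" for key, value in parts) + ";"


print(mirrored_connection_string("sqlvm01", "sqlvm02", "Sales"))
```

A legacy application that cannot accept a second server name in its connection settings is exactly the kind that will struggle with mirroring.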

Pros:

Relatively simple to install, configure, and manage.

Failover can be automatic when a witness server is used.

Very little downtime during failover.

Easy fail-back.

Cons:

Double the space on a SAN required.

Limited to two nodes.

Reading data from the secondary node is a painful process.

Database failover is per individual database.

Might not be supported by some legacy applications.

Deprecated in SQL Server 2012 in favor of AlwaysOn.

SQL Server 2012 AlwaysOn

I have talked about the benefits of SQL Server 2012 AlwaysOn before. The combination of the virtual IP from the no-shared-disk MFC configuration and the improved mirroring configuration lends itself beautifully to placement on VMware. An Availability Group in SQL Server 2012 can have up to four secondary replicas, up to two of which can be synchronous, and each secondary can be opened up to read-only activity (but check your licensing first). Databases can be bundled into Availability Groups so that the group fails over together.

This technology works beautifully for HA operations. My experience is that an unplanned failover still only takes 45s on average.
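Because the Availability Group presents a single virtual name to clients, the connection string gets simpler than with mirroring. The sketch below is only an illustration: the listener and database names are hypothetical, and the `ApplicationIntent` and `MultiSubnetFailover` keywords are the ones I recall from the SQL Server Native Client 11.0 ODBC documentation, so check them against your driver. Read-only connections also depend on read-only routing being configured on the server side.

```python
def listener_connection_string(listener, database, read_only=False,
                               multi_subnet=False,
                               driver="SQL Server Native Client 11.0"):
    """Build an ODBC connection string that targets an Availability Group listener.

    read_only    -- ask to be routed to a readable secondary, if routing is configured
    multi_subnet -- hint that the listener spans more than one subnet
    """
    parts = [
        ("Driver", "{" + driver + "}"),
        ("Server", listener),          # one virtual name, whichever node is primary
        ("Database", database),
        ("Trusted_Connection", "yes"),
    ]
    if read_only:
        parts.append(("ApplicationIntent", "ReadOnly"))   # assumed keyword
    if multi_subnet:
        parts.append(("MultiSubnetFailover", "Yes"))      # assumed keyword
    return ";".join(f"{key}={value}" for key, value in parts) + ";"


print(listener_connection_string("ag-sales-listener", "Sales"))
print(listener_connection_string("ag-sales-listener", "Sales", read_only=True))
```

The application only ever sees the listener name, which is what removes the “client must know both nodes” problem from traditional mirroring.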

Pros:

Relatively easy to set up and manage.

Solves most cons of traditional mirroring.

Allows read-only activity on secondary nodes.

Quick failover times.

Simple fail-back.

Cons:

Adjusted licensing model.

Need at least twice the space for data and replicas.

Transactional Replication or Log Shipping

Transactional replication and log shipping are both traditional ways to replicate data and changes from one server to another. However, neither is a great means of HA with a low RPO. They are somewhat complicated to implement and manage. They do not guarantee full database data replication. No automatic failover method exists, and fail-back is even more difficult.

However, in some environments, these forms of HA are suitable. Replication copies only the data you are actually interested in, which can reduce your bandwidth consumption. There is no wait time at the secondary node during a failover.

Weigh your options wisely and select the technology appropriate for your environment.

Pros:

Scale-out technology that can be used for HA.

Replicate the data that you care about, not the entire database.

Cons:

Complex to implement and manage.

No built-in automatic failover.

Difficult fail-back.

Clustering on VMware

Do any of the aforementioned alternatives mean I cannot run Microsoft Failover Clustering on VMware? Absolutely not. We have done this for a number of clients, and every time, it was the most appropriate means of achieving High Availability for their situation. Many scenarios demand MFC, and clustering on VMware is fully supported. In fact, just last week VMware released an updated MFC on VMware guide called ‘Setup for Failover Clustering and Microsoft Cluster Service’ for vSphere 5.1. Up to five nodes are now supported. VMware HA can still be used to ensure that VMs on a failed host are restarted on healthy hosts, which solves the single-point-of-failure issues that accompany a lengthy cluster node outage.

The tricky part comes with the architecture around the shared-disk configuration required by the clustering setup. The use of vMotion and DRS is prohibited. For shared-disk configurations spanning hosts (I can’t imagine why anyone would build a cluster-in-a-box except to play with), either Fibre Channel RDMs or in-guest iSCSI presentation of the shared cluster disks is required. Failover times can range from one minute to five, depending on the infrastructure.

Pros:

Fully supported.

Only space for one copy of data required.

Single IP address for applications to be aware of.

Cons:

Complex to manage without inducing greater downtime than a standalone instance.

Still have potentially lengthy downtime during failover.

Conclusion

This portion of the VBCA series is meant to inform you of all of the HA options available to you when you place your business-critical SQL Servers on the VMware platform. Many options are available, and at least one should work well for your organization and workloads.
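As a rough way to line the options up against the SLA question from earlier, here is a small sketch that filters them by the typical unplanned-failover times quoted in this post. The numbers are only the averages from my own experience mentioned above, not guarantees, and the helper name `options_within_sla` is hypothetical. Replication and log shipping are left out because they have no built-in automatic failover.

```python
# Typical unplanned-failover times in seconds, as quoted in this post.
# MFC was given as a range of one to five minutes; the upper end is used here.
QUOTED_FAILOVER_SECONDS = {
    "Single instance with VMware HA": 165,   # about 2m 45s
    "Database mirroring": 45,
    "AlwaysOn Availability Groups": 45,
    "Failover clustering (MFC)": 300,
}


def options_within_sla(sla_seconds, failover_seconds=QUOTED_FAILOVER_SECONDS):
    """Return the option names whose quoted failover time fits the SLA, fastest first."""
    fits = [(secs, name) for name, secs in failover_seconds.items()
            if secs <= sla_seconds]
    return [name for secs, name in sorted(fits)]


# A four-minute SLA for unplanned outages, as in the first question above.
print(options_within_sla(240))
```

Failover time is only one input. The complexity, licensing, and storage trade-offs in the pros and cons lists still have to be weighed for your own environment.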

In the next part of this series, I will discuss Disaster Recovery options with SQL Server on VMware. The remainder of the series then turns to the point where the challenge of business-critical virtualization becomes organizational, and people, rather than technology, require the most focused effort to change their minds. I close with a discussion of techniques that help demonstrate the power of virtualization and its benefits to those who might still fear or not understand it.